Come here, kid. Uncle Billie's gonna make ye a man.

Mood:

accident prone

Now Playing: Scoochie Coo - Boyfriend draws laundry marker moustache on girlfriend's baby (raucous laughter)

Topic: Announcements

Dear God it must irk the jerkins out of Microsoft engineering to sit back and watch (literally because I see not one of them breaking ranks. They will all go down in flames together because not two of them make up a whole story and they believed the "besieged messiah" act MSFT principals are building as a temple to corporate philanthropy made profitable.) while a once proud company is made to look like a blithering fool to avoid getting the socks put on the rolls of quarters Microsoft is about to get hit with. I feel it coming. I can't believe MSFT made a lame attempt to dump on McAuley's reputation in a claims construction! Is that desperation? Or stupidity?

What's fair treatment from the bench, do you think?

Microsoft is fielding some pretty swell stuff. Let's just say city level is loud and clear level for the simplicities.

In other words, brother, you're overplaying the part like a hasbeen Burlesque Queen giving the garters another tug and an invitation to coochie coo. Wear a shiny suit. The locals love that kind of stuff.

Anyway, it was bound to happen. As long as Microsoft lawyers run the company (does anybody suspect this is "marketing's" fault? What marketing? It's as though they're embarrassed to have these projects stuffed away in some "knownothing" bunnybunker without a decent codename) you're bound to hit a wall (could engineering be lame enough to actually build this kind of response on purpose? or do we have an engineer trying to sever his arm off with his antique sliderule to get to a payphone to call a journalist) where people simply aren't going to buy the "XML and http is hard" doofus act (Hey Morrie. I need an Alfred E. Neuman head looking like Gates.) especially when they see how you hounded the XML standards committees like Pepé in heat back in the day.

You know the "We own XML" period Microsoft went through - the "We own XML" patent statement that used to be a result on Google but try that string now and the internet is strangely quiet and suddenly devoid of any past Microsoft XML talk.

Suddenly we're Miss Lilly Whitelies of the Easternmost Hamptons. What a crock.

Ray Ozzie. If you can't field anything on the web today, you failed your constituents just as surely as a doctor would hold back a vaccine for sick developers. How long does it take to work on XML projects and then have the world hear "We don't and never did know $#!@ about no Standards. No Sir." and then realize all you've worked for is on a lawyer's ballpoint and the wrist is wiggled by some nitwit in accounting?

I wonder. I guess I won't get to feel that tug of power. Power comes with permission. Without permission, you're one of the help. Enjoy living in the mansion. Living in a bungalow with some pride has its joys.

.Net isn't supposed to be like that. But it is. In fact, given the current none-state of Microsoft presence in the web world, I would be inclined to buy the "we stink at XML and http and any other standards stuff, like" bit from recent principal as though the whole of Microsoft was unaware there even WAS a set of standards out there to think about, golly gee whiz.

But, then, I remember how every good lawyer would right about now kick in a credible Plan B to curtail discussion of assets or periods of infraction that may have benefitted Microsoft in the way of the "standards" based business they did while VCSY was unable to fairly market what they had and what it could do.

What does that lawyer think VCSY is going to do? Hell, you should take a look at the stack of 2000+ XML hype and bull$#!@ and demos this and whatchadoin hoedowns that is stuffed away in the wayback machine alone.

Goodness. It now appears Microsoft can't achieve anything remotely capable within the confines of VCSY technology. Read the 6826744 and 7076521 patents first if you're going to take me on on whether I'm shmoozing or telling it in school. These guys are disappointed with Microsoft .Net.

--------------------------------------

“The unfortunate reality is that these are scenarios that we care deeply about but do not fully support in V1.0. I can go into some more detail here. One point to note is that the choice on these features were heavily considered but we had the contention between trying to add more features vs. trying to stay true to our initial goal which was to lay the core foundation for a multiple-release strategy for building out a broader data platform offering. Today, coincidentally, marked the start of our work on the next version of the product and we are determined to address this particular developer community in earnest while still furthering the investment in the overall data platform.”

As Frank Costanza would say "What the hell does that mean?"

This is the subject of the Tester's distaste.

-----------------------------

Now, see there, class? I used the technology of the hypertext to take the user from the string that's underlined. The colors for fresh and loaded hypertext states are available via JAVA scripts.

Plus, the hypertext, once written can be copied and pasted (and the string can be changed to suit your description).

If you get the idea you might be able to tell machines to Do Something Until I Get Back Then Let Me Know what happened with the Something. Where Something is a web application taking care of routing comments from colleagues into folders and distributed for meeting requirements in global reach context.

I know. These underlined words didn't do anything. That's because you can be faked out by a text editor.

HOWDIDOIT?: (I selected the underline tool off this wysiwyg editor [see how you can string together words that stand for websites, resources, computing systems, shopping ecologies, game malls... and more but not really unless you can apply a layer of functionality under that word. SiteFlash and MLE allow for putting unlimited resources at that word. It's where you would expect to see traditional procedural technology of the 20th century placed in a situation where it can't put up when told to shut up.] and you can fake out the web user until they won't trust anything you put out after that.)

While Microsoft is acting like it never touched "standards", the rest of the world is marching on. What is today a search textbox or any kind of textbox will before long be offered as a command line able to call ultimate web resources into automation and play for very specific meanings to those words.

That is what an arbitrary framework allows for and that is what 744 is. Not only that, it's a framework for making arbitrary frameworks and etceteras to populate that paradigm.

Think I'm bull$#!@ing? Easy enough to remedy. Try me. Let's talk. I'll let you in on your own special comment line and we can talk about this new architecture and how plain the language is supposed to be. The VCSY lawyers make sense. The Microsoft lawyer sounds just like a lawyer.

It was so nice to read the VCSY brief. It sounds to me like a description from a home country I never visited. Eight long years of waiting to see what McAuley and Davison cooked up should end now.

Microsoft sounded throughout their brief like a muddled legal clerk. They openly contradict their own definitions. They attempt to portray McAuley's understanding of "computer" words as a bumpkin's grasp. And that "WebOS" slam was dirty bacon. I wouldn't let these lawyers make my breakfast IF you know what I mean...

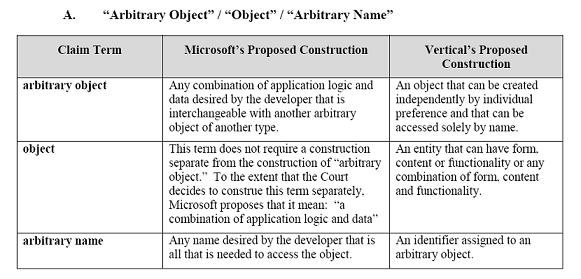

WebOS is the highest achievement of procedural software as evidenced by the work invented in the 90's (not being done now - WebOS is irrelevant). SiteFlash can build on top of WebOS. And the Microsoft lawyers fail to understand what's being said. That's what will hang Microsoft. Well, of course. They have to "understand" it this way. They've convinced themselves their definitions of arbitrary content, form and functionality are going to stand in the face of what VCSY can demonstrate those words mean.

I think the consensus is in. I think you will see MSFT throwing out everything anybody ever said concerning "standards" which is shorthand for XML and http to begin with. How did they do SOAP without XML/http? And why did they send up a sandbag in OOXML? What was in Longhorn that made all the demos run and what was Vista supposed to be? What's the game here? Seriously.

By the way "WebOS"? That's SiteFlash "prior art"? Uh... no. It was research done in the nineties that epitomizes the peak of traditional procedural computing. WebOS is what Siteflash can build as a simple demonstration of Siteflash capabilities; not WebOS capabilities.

It's almost like somebody on the legal team got a "hot tip" Siteflash was also called "WebOS". Well, if they didn't do their due diligence, they got taken. WebOS is something you can build and it can be done with either current procedural tools or with Siteflash. But, with SiteFlash you can bind multiples of these together on any resources. So where does the diagram show that? LOL

Seeing the WebOS diagram in the first Msft responses after the lawsuit was filed is what convinced me Microsoft has a weak case. The VCSY brief confirms my view resulting from reading the Microsoft brief. Obvious attempts to direct the thinking and remove thought processes from context - always a bad sign the speaker is trying to wrestle words to mean something.

The WebOS thing buys nothing but potential disdain and possible sanctions from this Judge who has a reputation for no nonsense. What NIMROD put that in? Whoever did should lose their position.

And if I can see that with no law experience, what will a Judge be able to see?... and say?

At the very least, at the end of the day, MSFT will have to explain how MSFT's version of this interpretation fares against VCSY's example. I think allowing positive examples of McAuley's work would be fair recompense to VCSY's standing in the hearings as the court and the defendant were not able to foresee the abrupt and pointless introduction of totally inappropriate material within what was understood by all adult participants to be a claims construction and not a prosecutorial venue nor a witch-hunt.

And when we test Microsoft's entry for meaning, it's either a muffed marketing act (self sabotage? having this be public is suicide for MSFT's image - is somebody blowing the booby hatch to float to the surface in Rolling Stone some time later?) or some engineer is held hostage and this is a scrawled 'help' note in SOAP on a Highway 101 restroom mirror (hell, that could be anything from Fred's Notso Good Taqueria or the latest Condo ecopartments). Oh, and some lipstick on the mirror, too.

MSFT will have to explain how their interpretation doesn't measure up. Why can't MSFT technology even begin talking about the kinds of things allowed by 521/744? The rest of the industry seems to have no problems. It's obvious to me MSFT is held in Lawyerly constraints until the risk of loss is near. Meanwhile Mom's holding the baby so tight to keep it from making a peep, the little tyke is suffocating. Going blue now. Go bye bye.

Meanwhile, the rest of the industry either has some form of permission to test market the claims or they are overtly using the claims as the only way left them to compete with Microsoft's touting of next-generation capabilities they could not deliver on.

Bill. Wake up and straighten up your house before you leave. Finish what you started or be consigned to fulfilling portuno's predictions. And you won't like what portuno can think, and it would all begin and end with those two stupid patents everyone assured everyone would be "taken care" of long long ago.

But, tonight, a more pleasant thought for us all.

What can we think about with 521/744? Ultimately, with 744/521, we're talking building systems that allow any user to treat the "search" textbox as a command line attached to their very own __unlimited__fill_in_the_blank__ computing resources. Want to imagine what that will be like?

Want to bet you won't see that in a Microsoft product any time soon if Microsoft continues to fight?

Remember the first DOS or Unix command line you punched in? Remember what you realized you could do because you knew how to write commands and build pipes and procedures? I remember. My first was on an RCA 1802 development "platform" graduating to CP/M - PL/I - Intel Multibus multi-board processing development tools in an automation shop in a once-household-name factory.

It's all in what you want to do with a computer. If you're the kind of person that wants to take a screwdriver to everything they get so you can feel more "involved" in the process, by all means stay with the desktop paradigm.

But, for those who want less machine between me and thee but can't seem to let go of the lust for power and speed, the oh so personal computing appliance on always-on cell broadband will always be just a finger flick away nestled in the pocket or purse... whatever you call that thing you stuff things in.

When you've thought of a computer as something you bang on until a prettier model comes along, your thinking is quite dull and rather stunted.

When you get a chance to treat a computer like a closer extension of your way of doing things, you begin a special relationship with the way you think.

And then... sniff sniff, there's the children.. sniff sniff. (In fact, I think I smell diaper right now. But, that could be SnookyWookums here. Yeah, that's right; the ugly kid. Ain't he, though? His Mama's supposed to be right back with the TV Guide.)

Anyway, the children will grow up never knowing what you and I see as "the internet". That archaic concept is going to evolve and absorb into communities of control and mayhem. Some by professionals but most by the same kind of folks who emerged when browsers allowed the average 14 year old kid to build websites for large corporations on a computer in the family garage. Innovators will emerge. They aren't home grown. They collect around ideas. And, if you're not interested in immersing yourself in the culture and the community, you never get the real thrust of ideas.

It's why Microsoft has such a problem coming to the point of realization as they rumble past opportunity after opportunity to reduce damage and slow the engine to be able to make the change without flying to pieces. They don't know the next generation languages or platforms so they don't understand what happens when you can't replicate that behaviour and production. They have to be shown and told. And still you have to say it much louder than that.

So they slide past the chance to limit the tragedy like an eighteen wheeler on ice through an intersection. There is no turning before going through the lights. You just hope the lights line up for that instant.

Once the age of procedural languages is shown to have run out of steam never having achieved building a really credible WebOS that could scale in widespread distribution, Microsoft, out of all the industry players, as far as I can see, will have the most to explain should they not be able to use 521 and 744 in public and thus suffer more disappointed MVPs.

The children will work with granular and macro virtualization patterns that can absorb any software components whole or parts into working applications that provide very highly abstracted functionality with any content presented in any format requested.

(Can't do that with Java, Johnny. Leaves a sweat stain on the old Stetson. Sweat don't leave a mark on white as long as the hat's clean.)

Turn off the TV and go to bed Jilly.

But Mama, Darlene wants to watch the rest of Jack Paar. I can't pry her off the floor until Jack Paar is over with.

Now, newly minted generations will be able to take the computer where nobody ever wanted to see it go. Enjoy. And build some neat $#!@. There's literally hell to pay.

Oh, well, enough reminiscing. Sniff sniff. Sorry to drip snot on you there on your back in the crib, SnookyWookums. Just remembering way back when I got into the digital business with thoughts that people were ethical and righteous and wouldn't lay a hand on the deacon's reputation.

Then I finds through life they got their hands on more than the deacon's reputation. I kinda blubbered there for a couple minutes thinking about the old instrumentation gang and how they never did manage to blow themselves up. At least I don't remember reading about it in the paper and... yeah, I would have read about that in the paper.

Old portuno's getting a might long in the bone. Eight long years... delayed and bumped every step of the way. Eight long years.

A lot's changed. The treefort way of seeing things is becoming a dominant view throughout most of the industry but the change is slow, measured and seemingly choreographed. The treefort way of seeing things does not show up in MSFT's current image and it's disappearing from old files about them. Weird, isn't it? Well, let's face it, Google would certainly like to see 521/744 stopped and helping Microsoft would be a good strategic move.

So, a whole lot's changed. Google didn't exist when VCSY brought out the XML Enabler Agent. Hailstorm came and went. It's probably coming around again.

I'm a little more stooped (stupid, get it? now, if I have to explain a joke, how is it a joke?) than usual. Dancing around like an idiot having to discuss the never ending inane crap written by those "skeptics". What drivel. This has certainly been a zen of much rancour. Such self discipline! Such commitment! My ass hurts. But it's more in the image of Abram driving the vultures away from his sacrifice as he waited for the fire to fall from the Almighty.

It ain't entertaining the folks while the popcorn is heating up, don't ya know... They're such ignorant dolts it's a wonder most of them don't drown in their own spittle whilst asleep.

I've changed a lot over the years, Snooky. My hands are bonier. My knees stick out more. Ears bigger. More hair there than where one would want hair there. Creaky bones and a diminished glow, but I'm kicking back and cruising now. Going to let the gardener clean up the back yard now from now on. They know what they're chopping down and what they're trying to nurse. And YOU have a diaper changing coming up. When's your mama coming home?

Dear Ma. Today we discussed the following:

Microsoft was unaware there even WAS a set of standards out there to think about, golly gee whiz.

disappointed with Microsoft .Net.

Tomorrow. The World.

Posted by Portuno Diamo

at 9:20 PM EDT

Updated: Wednesday, 25 June 2008 2:24 AM EDT

P. Douglas :

One thing I would like to know: does the author prefer using web apps over comparable desktop apps? E.g. does the author prefer using a web email app over a desktop email client? Doesn't he realize that most people who use Windows Live Writer, prefer using the desktop client over in-browser editors? Doesn't he realize that most people prefer using MS Office over Google Apps by a gigantic margin? The author needs to compare connected desktop apps (vs. regular desktop apps) to browser apps, to gauge the viability of the former. There is no indication that connected desktop apps are going to fade over time, as they can be far richer, and more versatile than the browser. In fact, these types of apps appear to be growing in popularity.

Besides, who wants to go back to the horrible days of thin client computing? In those days, users were totally at the mercy of sys admins. They did not have the empowerment that fat PCs brought. I just don't understand why pundits keep pushing for the re-emergence of thin client computing, when it is fat PCs which democratized computing, and allowed them to write the very criticisms about the PC they are now doing.

Posted by P. Douglas | April 30, 2008 3:50 PM

portuno :

"I just don't understand why pundits keep pushing for the re-emergence of thin client computing, when it is fat PCs which democratized computing, and allowed them to write the very criticisms about the PC they are now doing."

Because business and consumerism sees the move toward offloading the computing burdens from the client to other resources as a smart move. That's why.

Pundits are only reporting what the trends tell them is happening.

Posted by portuno | April 30, 2008 3:59 PM

P. Douglas :

"Because business and consumerism sees the move toward offloading the computing burdens from the client to other resources as a smart move. That's why."

Why is this a smart move? If the PC can provide apps with far richer interfaces that have more versatile utilities, how is the move to be absolutely dependent on computing resources in the cloud (and an Internet connection) better? It is one thing to augment desktop apps with services to enable users to get the best of both (the desktop and Internet) worlds, it is another thing to forgo all the advantages of the PC, and take several steps back to cloud computing of old (the mainframe). Quite frankly, if we kept on pursuing cloud computing from the 70s, there would be no consumer market for computing, and the few who would 'enjoy' it, would probably be confined to manipulating text data on green screen monitors.

"Pundits are only reporting what the trends tell them is happening."

Pundits are ignoring the trends towards connected desktop applications (away from regular desktop apps) which is proving to be more appealing than regular desktop apps and browser based apps.

Posted by P. Douglas | April 30, 2008 4:21 PM

portuno :

Why is this a smart move?

"If the PC can provide apps with far richer interfaces that have more versatile utilities, how is the move to be absolutely dependent on computing resources in the cloud (and an Internet connection) better?"

The PC can't provide apps with richer processing. The interfaces SHOULD be on the client, but the processing resources needed to address any particular problem do not need to be on the client.

The kind of processing that can be done on a client doesn't need the entire library of functions available on the client.

If your hardware could bring in processing capabilities as they became necessary, the infrastructural footprint would be much smaller.

The amount of juggling the kernel would have to do to keep all things computational ready for just that moment when you might want to fold a protein or run an explosives simulation, would be reduced to the things the user really wants and uses.
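To make "bring in processing capabilities as they became necessary" a little more concrete, here is a minimal sketch in Python. It is only an illustration, not anything taken from a real operating system or from SiteFlash: the `CapabilityLoader` class is a hypothetical name, and a standard-library module stands in for a "capability". The point is simply that nothing is loaded until a task asks for it.

```python
import importlib


class CapabilityLoader:
    """Hypothetical loader: a capability is pulled in on first use, not at startup."""

    def __init__(self):
        self._loaded = {}  # capability name -> loaded module

    def get(self, module_name):
        # Import lazily, then cache so the next request costs nothing extra.
        if module_name not in self._loaded:
            self._loaded[module_name] = importlib.import_module(module_name)
        return self._loaded[module_name]

    def loaded_names(self):
        return sorted(self._loaded)


loader = CapabilityLoader()

# The user only balances a checkbook today, so only that capability is loaded.
stats = loader.get("statistics")
mean_balance = stats.mean([120.0, 80.0, 100.0])
```

The loader never touches a protein-folding or simulation library unless somebody actually asks for one, which is the footprint argument in miniature.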

An OS like Vista carries far too much burden in terms of memory used and processing speeds needed. THAT is the problem and THAT is why Vista will become the poster child for dividing up content and format and putting that on the client with whatever functionality is appropriate for local computing.

This isn't your grandfather's thin client.

"It is one thing to augment desktop apps with services to enable users to get the best of both (the desktop and Internet) worlds, it is another thing to forgo all the advantages of the PC, and take several steps back to cloud computing of old (the mainframe)."

Why does everyone always expect the extremes whenever they confront the oncoming wave of a disruption event? What is being made available is the proper delegation of processing power and resource burden.

You rightly care about a fast user interface experience. But, you assume the local client is always the best place to do the processing of the content that your UI is formatting.

The amount of processing necessary to accomplish building or providing the content that will be displayed by your formatting resources can be small or large. It is better to balance your checkbook on your client. It is better to fold a protein on a server, then pass the necessary interface data and you get to see how the protein folding is done in only a few megabytes... instead of terabytes.
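The checkbook-versus-protein split can be sketched as a simple placement rule. This is a hedged illustration only: the `Task` type, the `choose_executor` function and the 50 MB threshold are all assumptions made up for the example, not figures from any real system.

```python
from dataclasses import dataclass

# Hypothetical cutoff: working sets above this go to the server.
LOCAL_BUDGET_MB = 50


@dataclass
class Task:
    name: str
    working_set_mb: float  # rough size of the data the task must chew through


def choose_executor(task, budget_mb=LOCAL_BUDGET_MB):
    """Small jobs stay on the client; big ones run remotely and send back
    only the interface data needed to display the result."""
    return "client" if task.working_set_mb <= budget_mb else "server"


checkbook = Task("balance checkbook", working_set_mb=1)
protein = Task("fold protein", working_set_mb=2_000_000)  # terabyte scale

placement = {t.name: choose_executor(t) for t in (checkbook, protein)}
```

A real scheduler would weigh bandwidth, latency and battery as well; the single threshold here is just the smallest rule that shows the idea.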

"Quite frankly, if we kept on pursuing cloud computing from the 70s, there would be no consumer market for computing, and the few who would 'enjoy' it, would probably be confined to manipulating text data on green screen monitors."

We couldn't continue mainframing from that time because there was not a ubiquitous transport able to pass the kind of interface data needed outside of the corporate infrastructure.

Local PCs gave small businesses the ability to get the computing power in their mainframe sessions locally. And, until Windows, we had exactly that thin client experience on the "PC".

Windows gave us an improved "experience" but at the cost of a race in keeping hardware current with a kind of planned obsolescence schedule.

We are STILL chasing the "experience" on computers that can do everything else BUT format content well - it's why "Glass" is the key improvement in Vista, is it not? It's why the "ribbon" is an "enhancement" and not just another effort to pack more functionality into an application interface...

THE INTERFACE. Not the computing. The interface; a particular amount of content formatted and displayed. Functionality is what the computer actually does when you press that pretty button or sweep over that pretty video.

Mainframes that are thirty years old connected to a beautiful modern interface can make modern thin client stations sing... and THAT is what everyone has missed in this entire equation.

Web platforming allows a modernization of legacy hardware AND legacy software without having to touch the client. When you understand how that happens, you will quickly see precisely what the pundits are seeing. That's why I said: 'Pundits are only reporting what the trends tell them is happening.'

"Pundits are ignoring the trends towards connected desktop applications (away from regular desktop apps) which is proving to be more appealing than regular desktop apps and browser based apps."

Do you know WHY "Pundits are ignoring the trends towards connected desktop applications"? Because there aren't any you can get to across the internet! At least until very recently.

If you're on your corporate intranet, fine. But, tell me please, just how many "connected desktop applications" there are? Microsoft certainly has little and THAT's even on their own network protocols.

THAT is what's ridiculous.

XML allows applications to connect. Microsoft invented SOAP to do it (and SOAP is an RPC system using XML as the conduit) and they can't do that very well. Only on the most stable and private networks.

DO IT ON THE INTERNET and the world might respect MSFT.

The result of Microsoft not being on the internet is their own operating system is being forced into islandhood and the rest of the industry takes the internet as their territory.

It's an architectural thing and there's no getting around those. It's the same thing you get when you build a highway interchange. It's set in concrete and that's the way the cars are going to have to go, so get used to it.

Lamenting the death of a dinosaur is always unbecoming. IBM did it when The Mainframe met the end of its limits in throughput and reach. The PC applied what the mainframe could do on the desk.

Now, you need a desktop with literally the computing power of many not-so-old mainframes to send email, shop for shoes, and write letters to granny. Whose idea of proper usage is this? Those who want a megalith to prop up their monopoly.

The world wants different.

Since there are broadband leaps being carved out in the telecommunications industry, the server can do much more with what we all really want to do than a costly stranded processor unable to reach out and touch even those of its own kind much less the rest of the world's applications.

The mentality is technological bunkerism and is what happens in the later stages of disruption. It took years for this to play out on IBM.

It's taken only six months to play out on Microsoft and it's only just begun. We haven't even reached the tipping point and we can see the effect accelerating from week to week.

It's due to the nature of the media through which the change is happening. With PCs the adoption period was years. With internet services and applications, the adoption period is extremely fast.

Posted by portuno | April 30, 2008 11:55 PM

P. Douglas :

"The kind of processing that can be done on a client doesn't need the entire library of functions available on the client.

If your hardware could bring in processing capabilities as they became necessary, the infrastructural footprint would be much smaller.

The amount of juggling the kernel would have to do to keep all things computational ready for just that moment when you might want to fold a protein or run an explosives simulation, would be reduced to the things the user really wants and uses."

How then do you expect to work offline? I have nothing against augmenting local processing with cloud processing, but part of the appeal of the client is being able to do substantial work offline during no connection or imperfect / limited network / Internet connection scenarios. Believe me, for most people, limited network / Internet connection scenarios occur all the time. Also, the software + software services architecture minimizes bandwidth demands allowing more applications and more people to benefit from an Internet connection at a particular node. In other words, the above architecture is much more efficient than a dumb terminal architecture, or the one that you are advocating. This means that e.g. in a scenario where you have a movie being downloaded to your Xbox 360, several podcasts automatically being downloaded to your iTunes or Zune client software, your TV schedule being updated in Media Center, your using a browser, etc., and the above being multiplied for several users and several devices at a particular Internet connection, the software + software services architecture is seen to be far better and more practical than a dumb terminal architecture.

Posted by P. Douglas | May 1, 2008 8:04 AM

portuno :

@ P. Douglas,

"How then do you expect to work offline?"

Offline work can be done by a kernel dedicated to the kind of work needed at the time. In other words, instead of a megalith kernel (Vista's is 200MB+) running all functions, you place a kernel (an agent can be ~400KB) optimized for the specific kind of work to be done. This kernel can be very small (because it won't be doing ALL processing - only the processing necessary for the tasks selected - it can be only one of multiple kernels interconnected for state determinism) and the resources available online or offline (downloaded when the task is selected).

The big "bugaboo" during the AJAX development efforts in 2005 and 2006 was "how do you work offline"? The agent method places an operational kernel on the client which is a mirror (if necessary) of the processing capability on the remote server. When the system is "online", the kernel cooperates with the server for tasking and processing. When the client is "offline", the local agent does the work, then synchs up the local state with the server when online returns.
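A bare-bones sketch of that online/offline behaviour, in Python, might look like the following. Everything here is an assumption for illustration: `Server` and `LocalAgent` are hypothetical stand-ins, the "server" is just an in-memory dictionary, and conflicts are resolved naively by replaying queued writes in order. It is not a description of any shipping agent.

```python
class Server:
    """Stand-in for the remote side; holds the authoritative state."""

    def __init__(self):
        self.state = {}

    def apply(self, key, value):
        self.state[key] = value


class LocalAgent:
    """Hypothetical client agent mirroring the server's processing locally."""

    def __init__(self, server):
        self.server = server
        self.online = True
        self.state = {}    # local mirror, always usable
        self.pending = []  # writes made while offline

    def write(self, key, value):
        self.state[key] = value
        if self.online:
            self.server.apply(key, value)      # cooperate with the server
        else:
            self.pending.append((key, value))  # do the work locally, remember it

    def go_offline(self):
        self.online = False

    def go_online(self):
        # Sync up: replay queued writes in order, then clear the queue.
        self.online = True
        for key, value in self.pending:
            self.server.apply(key, value)
        self.pending.clear()


server = Server()
agent = LocalAgent(server)
agent.write("draft", "v1")   # online: server sees it immediately
agent.go_offline()
agent.write("draft", "v2")   # offline: only the local mirror changes
state_while_offline = dict(server.state)
agent.go_online()            # connection returns, queued work is pushed
```

While offline the server still holds the old draft; once the connection returns it catches up. A production design would need real conflict handling if the server state could change in the meantime.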

No online-offline bugaboo. Just a proper architecture. That's what was needed and AJAX doesn't provide that processing capability. All AJAX was originally intended to do was to reduce that latency between client button push, server response and client update.

"...part of the appeal of the client is being able to do substantial work offline during no connection or imperfect / limited network / Internet connection scenarios."

Correct. And you don't need a megalithic operating system to do that. What you DO need is an architecture that's fitted to take care of both kinds of processing with the most efficient resources AT THE TIME. Not packaged and lugged around waiting for the moment.

"...limited network / Internet connection scenarios occur all the time."

Agreed. So the traditional solution is to load everything that may ever be used on the client? Why don't we use that on-line time to pre-process what can be done and load the client with post processing that is most likely for that task set?

"Also, the software + software services architecture minimizes bandwidth demands allowing more applications and more people to benefit from an Internet connection at a particular node. In other words, the above architecture is much more efficient than a dumb terminal architecture, or the one that you are advocating."

"More efficient" at the cost of much larger demands on local computing resources. Much larger demands on memory (both storage and runtime). Much larger demands on processor speed (the chip has to run the background load of the OS plus any additional support apps running to care for the larger processing load you've accepted).

You will find there will be no "dumb terminals" in the new age. A mix of resources is what the next age requires and a prejudice against a system that was limited by communications constraints 20 years ago doesn't address the problems brought forward by crammed clients.

"This means that e.g. in a scenario where you have a movie being downloaded to your Xbox 360, several podcasts automatically being downloaded to your iTunes or Zune client software, your TV schedule being updated in Media Center, your using a browser, etc., and the above being multiplied for several users and several devices at a particular Internet connection, the software + software services architecture is seen to be far better and more practical than a dumb terminal architecture."

At a much higher cost in hardware, software, maintenance and governance.

Companies are not going to accept your argument when a fit client method is available. The fat client days are spelled out by economics and usefulness.

Because applications can't interoperate (Microsoft's own Office XML format defies interoperation for Microsoft - how is the rest of the world supposed to interoperate?) they are limited in what pre-processing, parallel processing or component processing can be done. The only model most users have any experience with is the fat client model... and the inefficiencies of that model are precisely what all the complaining is about today.

Instead of trying to justify that outmoded model, the industry is accepting a proper mix of capabilities and Microsoft has to face the fact (along with Apple and Linux) that a very large part of their user base can get along just fine with a much more efficient, effective and economical model - being either thin client or fit client.

It's a done deal and the fat client people chose to argue the issues far too late because the megaliths that advocate fat client to maintain their monopolies and legacies no longer have a compelling story.

The remote resources and offloaded burdens tell a much more desirable story.

People listen.

Posted by portuno | May 1, 2008 12:07 PM

P. Douglas :

"Offline work can be done by a kernel dedicated to the kind of work needed at the time. In other words, instead of a megalith kernel (Vistas is 200MB+) running all functions, you place a kernel (an agent can be ~400KB) optimized for the specific kind of work to tbe done. This kernel can be very small (because it won't be doing ALL processing - only the processing necessary for the tasks selected - it can be only one of multiple kernels interconnected for state determinism) and the resources available online or offline (downloaded when the task is selected)."

I don't quite understand what you are saying. Are you saying computers should come with multiple, small, dedicated Operating Systems (OSs)? What do you do then when a user wants to run an application that uses a range of resources spanning the services provided by these multiple OSs? Do you understand the headache this will cause developers? Instead of having to deal with a single coherent set of APIs, they will have to deal with multiple overlapping APIs. Also it seems to me that if an application spans multiple OSs, there will be significant latency issues. E.g. if OS A is servicing 3 applications, and one of the applications (App 2) is also being serviced by OS B, App 2 will have to wait until OS A is finished servicing requests made by the 2 other applications. What you are suggesting would result in unnecessary complexity, and would wind up being overall more resource intensive than a general purpose OS - like the kinds you find in Windows, Mac, and Linux.

"The agent method places an operational kernel on the client which is a mirror (if necessary) of the processing capability on the remote server. When the system is "online", the kernel cooperates with the server for tasking and processing. When the client is "offline", the local agent does the work, then synchs up the local state with the server when online returns."

The software + services architecture is better because of the reasons I indicated above, because a user can reliably do his work on the client (i.e. he is not at the mercy of an Internet connection), and because data can be synched up just like in your model.

""More efficient" at the cost of much larger demands on local computing resources. Much larger demands on memory (both storage and runtime). Much larger demands on processor speed (the chip has to run the background load of the OS plus any additional support apps running to care for the larger processing load you've accepted)."

Local computing resources are cheap enough and are far more dependable than the bandwidth requirements under your architecture.

"At a much higher cost in hardware, software, maintenance and governance.

Companies are not going to accept your argument when a fit client method is available. The fat client days are spelled out by economics and usefulness."

Thin client advocates have been saying this for decades. The market has replied that the empowerment and versatility advantages of the PC outweigh whatever maintenance savings there are in thin client solutions. In other words, it is a user's overall productivity which matters (given the resources he has), and users are overall much more productive and satisfied with PCs than they are with thin clients.

Posted by P. Douglas | May 1, 2008 2:27 PM

portuno :

P. Douglas:

"I don't quite understand what you are saying. Are you saying computers should come with multiple, small, dedicated Operating Systems (OSs)? "

What do you think Windows 7 will be? More of the same aggregated functionality bundled into a shrinkwrapped package? Would you not make the OS an assembly of interoperable components that could be distributed and deployed when and where needed, freeing the user's machine to use its hardware resources for the user experience rather than as a hot box for holding every DLL ever made?

"unnecessary complexity"????

Explain to me how a single OS instance running many threads is less complex than multiple OS functions running their own single threads and passing results and state to downstream (or upstream if you need recursion) processes.
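The structure described above, single-threaded functions handing results to downstream processes, can be sketched roughly as follows. This is only an illustration: `stage`, `run_pipeline`, and the `_DONE` sentinel are hypothetical names, and ordinary Python threads and queues stand in for separate OS functions.

```python
import queue
import threading

_DONE = object()  # sentinel marking the end of the stream


def stage(func, inbox, outbox):
    """Run one single-threaded function and pass each result downstream."""
    while True:
        item = inbox.get()
        if item is _DONE:
            outbox.put(_DONE)  # tell the next stage to stop as well
            return
        outbox.put(func(item))


def run_pipeline(funcs, items):
    """Chain funcs as stages connected by queues and collect the results."""
    queues = [queue.Queue() for _ in range(len(funcs) + 1)]
    threads = [
        threading.Thread(target=stage, args=(f, queues[i], queues[i + 1]))
        for i, f in enumerate(funcs)
    ]
    for t in threads:
        t.start()
    for item in items:
        queues[0].put(item)
    queues[0].put(_DONE)

    results = []
    while True:
        out = queues[-1].get()
        if out is _DONE:
            break
        results.append(out)
    for t in threads:
        t.join()
    return results


# Two stages: the first adds one, the second doubles the result.
print(run_pipeline([lambda x: x + 1, lambda x: x * 2], [1, 2, 3]))
```

Each stage owns its own thread and shares nothing except the queues, so state moves only in one direction. Whether that is simpler in practice than one multithreaded instance is the point under debate here; the sketch does not settle it.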

What I've just described is a fundamental structure in higher end operating systems for mainframes. IBM is replacing a system with thousands of servers with only 33 mainframes. What do you think is going on inside those mainframes? And why can't that kind of process work just as well in a single client or a client connected to a server or a client connected to many servers AND many clients fashioned into an ad hoc supercomputer for the period needed?

"Thin client advocates have been saying this for decades."

The most dangerous thing is to say "this road has been this way for years" and then drive into the future with your eyes closed.

If your position were correct, we would never be having this conversation. But, we ARE having this conversation because the industry is moving forward and upward and leaving behind those who say "...advocates have been saying this for decades...".

Yada Yada Yada

Posted by portuno | May 1, 2008 3:48 PM